If seeing is believing, then feeling has got to be downright convincing. But feeling something that really isn’t there? Ultrahaptics brings the long-neglected sense of touch to the virtual world. And now you can add this to your own products and customer experiences.

Anyone taking in Disneyland’s 1994 3D attraction, Honey, I Shrunk the Audience, was treated to a glimpse of the future of virtual reality. The film was synchronized with several special effects built into the theater itself, including air jets mounted beneath the seats that pulsed shots of air right onto the ankles of the audience members, quite realistically simulating the feel of scurrying mice as they were virtually let loose in the theater. I still remember the screams of surprise.

Things have come a long way since then. Air jets and vortices have given way to a new generation of technologies, including ultrasound, which enables highly nuanced tactile effects (haptics) and now promises to revolutionize user experiences in Augmented and Virtual Reality.

Ultrahaptics’ technology uses ultrasound to generate a haptic response directly onto the user’s bare hands. Gesture controls can be used to operate infotainment systems, such as in-car audio and connected-car applications, more intuitively.

Haptics is the science of touch. Ultrahaptics, the company, is taking haptics to a whole new realm, creating that sense of touch in midair. The applications of touch are as limitless as sight and sound: think virtual force fields, touchless dials complete with the clicking feel of detents, holographic buttons, sliders, and switches with which you can control a myriad of devices — your music, thermostat, lighting, your car’s infotainment system . . . pretty much anything. You can now interact in a natural, intuitive way with any virtual object.

How will the driving of the future look? Bosch presented its vision at CES 2017 with a new concept car. Alongside home and work, connectivity is turning the car into the third living space. The concept car includes gesture control with haptic feedback. Developed with Ultrahaptics, the technology uses ultrasound sensors that sense whether the driver’s hand is in the correct place, and then provides feedback on the gesture being executed.

Founded in 2013, Ultrahaptics grew out of research conducted by Tom Carter as a student at the University of Bristol in the UK. There he worked under the supervision of computer science professor Sriram Subramanian, who ran a lab devoted to improving human-computer interaction. Subramanian, who has since moved to the University of Sussex, had long been intrigued by the possibilities of haptic technologies, but hadn’t brought them to fruition for want of solving the complex programming challenges. That’s where Carter comes in.

With the fundamental programming problems solved, the company’s solution works by generating focused points of acoustic radiation force—a force generated when the ultrasound is reflected onto the skin—in the air over a display surface or device. Beneath that surface lies a phased array of ultrasonic emitters (essentially tiny ultrasound speakers), which produce steerable focal points of ultrasonic energy with sufficient sound pressure to be felt by the skin.

Using proprietary signal processing algorithms, the array of ultrasonic speakers or “transducers” generates the focal points at a frequency of 40kHz. The 40kHz frequency is then modulated at a lower frequency within the perceptual range of feeling in order to allow the user to feel the desired haptic sensation. Ultrahaptics typically uses a frequency from 1–300Hz, corresponding to the peak sensitivity of the tactile receptors. Modulation of the frequency is one of the parameters that can be adjusted by the API to create different sensations. The location of the focal point, determined by its three-dimensional coordinates (x, y, z), is programmed through the system’s API.
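As a rough illustration of the modulation described above, the sketch below multiplies a 40kHz carrier by a slow envelope. It assumes simple amplitude modulation, a 200Hz modulation frequency and a 400kHz simulation sample rate; none of these choices comes from Ultrahaptics, and the function names are hypothetical rather than part of the company's API.

```python
import math

CARRIER_HZ = 40_000        # carrier frequency stated in the article
MODULATION_HZ = 200        # assumed example inside the 1-300 Hz range
SAMPLE_RATE_HZ = 400_000   # assumed simulation rate, 10x the carrier


def envelope_at(t, modulation_hz=MODULATION_HZ):
    """Slow envelope that swings between 0 and 1 at the modulation frequency."""
    return 0.5 * (1.0 - math.cos(2.0 * math.pi * modulation_hz * t))


def modulated_sample(t, carrier_hz=CARRIER_HZ, modulation_hz=MODULATION_HZ):
    """Amplitude-modulated drive signal at time t (seconds), within [-1, 1]."""
    carrier = math.sin(2.0 * math.pi * carrier_hz * t)
    return envelope_at(t, modulation_hz) * carrier


# Samples covering exactly one modulation period.
n_samples = SAMPLE_RATE_HZ // MODULATION_HZ
signal = [modulated_sample(i / SAMPLE_RATE_HZ) for i in range(n_samples)]
```

The skin responds to the slow envelope rather than to the 40kHz carrier, so changing `MODULATION_HZ` in this sketch is the analogue of adjusting the modulation parameter mentioned above.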

Beyond creating a superior computer-human interaction with more intuitive, natural user experiences, the technology is also finding applications in use cases ranging from hygiene (don’t touch that dirty thing) to accessibility (enabling the deaf and blind) and safer driving experiences. Really, the possibilities are endless: if you can control an electronic device by touch, chances are you can go touchless with haptics.

A Sound Foundation

Ultrasound, in terms of its physical properties, is nothing more than an extension of the audio frequencies that lie beyond the range of human hearing, which generally cuts off at about 20kHz. As such, ultrasound devices operate with frequencies from 20kHz on up to several gigahertz. Ultrahaptics settled on a carrier frequency of 40kHz for its system.

Not only can humans not hear anything above 20kHz, we can’t feel it, either. The receptors within human skin can only detect changes in intensity of the ultrasound. The 40kHz ultrasound frequency must therefore be modulated at far lower frequencies that lie within the perceptual range of feeling, which turns out to be a fairly narrow band of about 1-400Hz.

As to how we feel ultrasound, haptic sensation is the result of the acoustic radiation force that is generated when ultrasound is reflected. When the ultrasound wave is focused onto the surface of the skin, it induces a shear wave in the skin tissue. This in turn triggers mechanoreceptors within the skin, generating the haptic impression. (Concern over absorption of ultrasound is mitigated by the fact that 99.9% of the pressure waves are fully reflected away from the soft tissue.)

Feeling is Believing

Ultrahaptics’ secret sauce, as you might imagine, lies in its algorithms, which dynamically define focal points by selectively controlling the respective intensities of each individual transducer to create fine haptic resolutions, resolving gesture-controlled actions with fingertip accuracy.

When several transducers are focused constructively on a single point (defined by its x, y, z coordinates), the acoustic pressure increases to as much as 250 pascals, which is more than sufficient to generate tactile sensations. The focal points are then isolated by the generation of null control points everywhere else. That is, the system outputs the lowest intensity ultrasound level at the locations surrounding the focal point. In the algorithm’s final step, the phase delay and amplitude are calculated for each transducer in the array to create an acoustic field that matches the control points, the effect being that ultrasound is defocused everywhere in the field above or below that controlled focus point.
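The focusing step alone can be sketched with textbook phased-array geometry: each transducer is delayed so that its wavefront arrives at the focal point at the same instant as all the others. This is a minimal sketch, not Ultrahaptics' proprietary algorithm; it ignores null control points and amplitude solving, and the 10.5mm transducer pitch, the speed of sound and all function names are assumptions. The 16×16 grid matches the array size the article gives for the evaluation kit.

```python
import math

SPEED_OF_SOUND_M_S = 343.0   # air at roughly 20 degrees C (assumed)
CARRIER_HZ = 40_000          # carrier frequency stated in the article
PITCH_M = 0.0105             # transducer spacing, hypothetical value
GRID = 16                    # 16 x 16 array, as in the evaluation kit


def transducer_positions(grid=GRID, pitch=PITCH_M):
    """Centres of a square grid in the z = 0 plane, centred on the origin."""
    offset = (grid - 1) / 2.0
    return [((col - offset) * pitch, (row - offset) * pitch, 0.0)
            for row in range(grid) for col in range(grid)]


def focus_delays(focal_point, positions, speed=SPEED_OF_SOUND_M_S):
    """Firing delays (seconds) so every wavefront reaches focal_point together."""
    distances = [math.dist(p, focal_point) for p in positions]
    farthest = max(distances)
    # The farthest element fires first (delay 0); nearer elements wait.
    return [(farthest - d) / speed for d in distances]


def delays_to_phases(delays, carrier_hz=CARRIER_HZ):
    """Equivalent carrier phase offsets in radians, wrapped to [0, 2*pi)."""
    return [(2.0 * math.pi * carrier_hz * d) % (2.0 * math.pi) for d in delays]


positions = transducer_positions()
focal_point = (0.0, 0.0, 0.20)   # 20 cm above the centre of the array
delays = focus_delays(focal_point, positions)
phases = delays_to_phases(delays)
```

Moving `focal_point` and recomputing the delays steers the focus; for a point on the array's central axis, as here, the corner transducers fire first and the central ones last.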

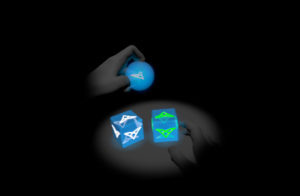

The modulated ultrasound waves, which are precisely controlled, are transmitted from an array of transducers such that the resulting interference pattern creates focal points in midair, as indicated by the green dot in the image above.

Things get more interesting when modulating different focal points at different frequencies to give each individual point of feedback its own independent “feel.” In this way the system is not only able to correlate haptic and visual feedback, but a complete solution can attach meaning to noticeably different textures so that information can be transferred to the user via the haptic feedback.

The API gives the ability to generate a range of different sensations, including:

- Force fields: a use case for this could include utilization in domestic appliances, for example a system warning that a user is about to put their hand on a hob that is not completely cool.

- Haptic buttons, dials and switches: these are particularly interesting in the automotive industry, where infotainment controls, for example, can be designed to be projected onto a user’s hand without the driver having to look at the dashboard.

- Volumetric haptic shapes: in a world where virtual and augmented reality could become part of our everyday lives, one of the missing pieces of the puzzle is the ability to feel things in a virtual world. Ultrahaptics’ technology can generate different shapes, giving a haptic resistance when users, immersed in a virtual world, are expecting to feel an object.

- Bubbles, raindrops and lightning: the range of sensations that can be generated is vast; it can range from a “solid” shape to a sensation such as raindrops or virtual spiders. As well as being of interest to the VR gaming community this is also something that will be extremely interesting for location-based entertainment.

These sensations are generated by modulation of the frequency and the wavelength of the ultrasound, and these options are some of several parameters that can be adjusted by the API to create different sensations. The location of the focal point, determined by its three-dimensional coordinates, is also programmed via the system’s API.

Gesture Tracking gets Touchy

Gesture control, of course, requires a gesture tracking sensor/controller—for example the Leap Motion offering, which Ultrahaptics has integrated into its development and evaluation system. The controller determines the precise position of a user’s hands—and fingers—relative to the display surface (or hologram, as the case may be). Stereo cameras operating with infrared, and augmented to measure depth, provide high-accuracy 3D spatial representation of gestures that “manipulate” the active haptic field. The system can use any camera/sensor; the key is its ability to reference the x, y, z coordinates through the Ultrahaptics API.

In the Interest of Transparency

Another key component of a haptic feedback system is the medium over which the user interacts—in this case, a projected display screen or device, beneath which is the transducer array. The chief characteristic of the display surface/device is its degree of acoustic transparency: the display surface must allow ultrasound waves to pass through without defocusing and with minimum attenuation. The ideal display would therefore be totally acoustically transparent.

The acoustics experts at Ultrahaptics have found that a display surface perforated with 0.5mm holes and 25% open space reduces the impact on the focusing algorithm, while still maintaining a viable projection surface. In time, we may see acoustic metamaterials come into play. By artificially creating a lattice structure within a material, it is possible to correct for the refraction that occurs as the wave passes through the material. This would enable the creation of a solid material that permits a selected frequency of sound to pass through it. A pane of glass manufactured with this technique would provide the perfect display surface. It has also been shown that such a material could enhance the focusing of the ultrasound by acting as an acoustic lens. But, again, we’ll have to wait for this; acoustic metamaterial-based solutions are only beginning to emerge. In the meantime, surface materials that perform well include woven fabrics, such as those that would be used with speakers; hydrophobic acoustic materials, including the range from Saati Acoustex, which also protect from dust and liquids; and perforated metal sheets.
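To put the perforation figures in concrete terms, the sketch below works out how far apart 0.5mm holes would sit to give 25% open space. It assumes round holes laid out on a square grid, which the article does not specify, and the function name is hypothetical.

```python
import math

HOLE_DIAMETER_MM = 0.5   # hole size stated in the article
OPEN_FRACTION = 0.25     # 25% open space, stated in the article


def square_grid_pitch(diameter_mm, open_fraction):
    """Centre-to-centre spacing for round holes on a square grid (assumed layout)."""
    if not 0.0 < open_fraction < math.pi / 4.0:
        raise ValueError("open fraction must lie between 0 and pi/4 on a square grid")
    hole_area = math.pi * (diameter_mm / 2.0) ** 2
    # Each hole owns one pitch x pitch cell: open_fraction = hole_area / pitch**2.
    return math.sqrt(hole_area / open_fraction)


pitch_mm = square_grid_pitch(HOLE_DIAMETER_MM, OPEN_FRACTION)
print(f"hole pitch: {pitch_mm:.3f} mm")   # prints "hole pitch: 0.886 mm"
```

Under these assumptions the holes sit a little under 0.9mm apart, centre to centre; a staggered (hexagonal) layout would give a slightly different spacing for the same open area.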

Generating 3D Shapes

Ultrahaptics’ system is not limited to points, lines or planes; it can actually create full 3D shapes—shapes you can reach out to touch and feel such as spheres, pyramids, prisms and cubes. The shapes are generated with a number of focal points projected in a (x, y, z) position, and move as their position is updated at the chosen refresh rate. Ultrahaptics continues to investigate this area to push the boundaries of what can be achieved.
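One way to picture a shape built from focal points is to sample its surface and recompute the samples on every update. The sketch below approximates a sphere with rings of (x, y, z) points and shifts it a little each frame. The ring counts, the 100Hz refresh rate in the usage lines and the function names are all assumptions for illustration; the article does not say how many points a real device renders per update.

```python
import math


def sphere_outline(center, radius, rings=5, points_per_ring=8):
    """Sample focal-point positions on a sphere as a stack of horizontal rings."""
    cx, cy, cz = center
    points = []
    for r in range(1, rings + 1):
        polar = math.pi * r / (rings + 1)        # skip the two poles
        ring_radius = radius * math.sin(polar)
        z = cz + radius * math.cos(polar)
        for k in range(points_per_ring):
            azimuth = 2.0 * math.pi * k / points_per_ring
            points.append((cx + ring_radius * math.cos(azimuth),
                           cy + ring_radius * math.sin(azimuth),
                           z))
    return points


def frames(center, velocity, radius, refresh_hz, n_frames):
    """Yield one focal-point list per update as the sphere drifts at `velocity`."""
    for i in range(n_frames):
        t = i / refresh_hz
        moved = tuple(c + v * t for c, v in zip(center, velocity))
        yield sphere_outline(moved, radius)


# A 3 cm sphere, 20 cm above the array, drifting along x at 0.1 m/s.
start = (0.0, 0.0, 0.20)
updates = list(frames(start, (0.1, 0.0, 0.0), 0.03, refresh_hz=100, n_frames=3))
```

Each entry in `updates` is the full set of coordinates for one refresh, which is the kind of data that would be passed to a focal-point API on each update.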

Evaluation & Development Program

For developers looking to experiment and create advanced prototypes with 3D objects or AR/VR haptic feedback, the Ultrahaptics Evaluation Programme includes a development kit (UHEV1) providing all the hardware peripherals (transducer array, driver board, Leap Motion gesture controller) and software, as well as technical support to generate custom sensations. The square transducer platform comprises a 16×16 array of transducers, a total of 256 ultrasound speakers, driven by the system’s controller board, which uses an XMOS processor to control the transducers. The evaluation kit offers developers a plug-and-play solution that works with any computer running Windows 8 or above, or OS X 10.9 or above. In short, it provides everything you need to implement Ultrahaptics in your product development cycle.

The UHDK5 TOUCH development kit is available through Ultrahaptics distributor EBV and includes a transducer array board, gesture tracking sensor, software suite and fully embedded system architecture (microprocessor and FPGA). The Sensation Editor library includes a range of sensations that can be configured to individual design requirements as well as enabling the development of tailored sensations using the software suite provided.